Evolving consumer demands and cloud-native tools are driving a new wave of business innovation. We develop custom software that helps organizations make a bold impact in their marketplace.

- Bolster brand position

- Connect people to information and data, faster

- Delight end users and drive more value

- Maximize your data investment

- Meet evolving consumer demands

- Protect market share

71% of IT decision-makers from midmarket and enterprise companies expect to develop and deploy cloud-native applications in 2023

Why Choose Core BTS for Your Modern Application Development?

Innovation

Creativity, technical expertise, and breadth of experience come together to deliver innovative solutions.

Modernization

Modern architectures unleash new capabilities, including the ability to adapt quickly to market demands.

Ability to Deliver

Clients trust us to deliver robust, secure, quality software on time and within budget.

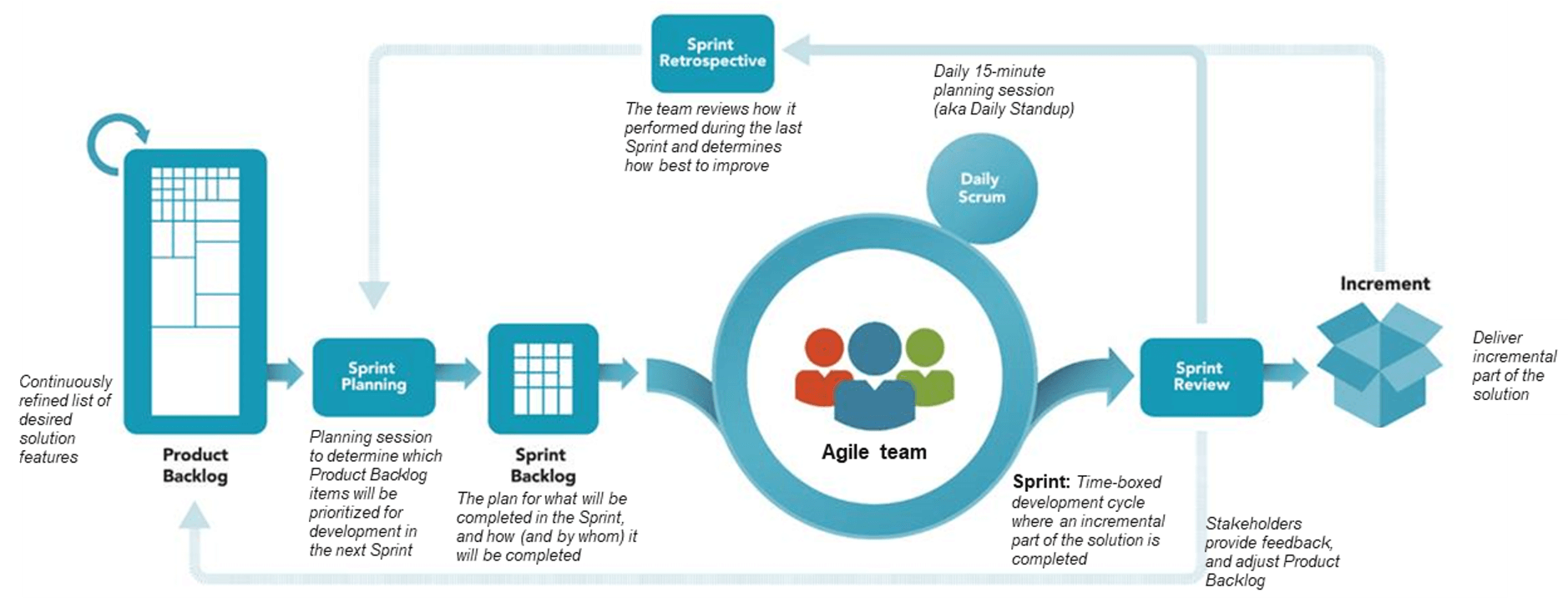

Agile

We deliver software iteratively, with full transparency and with the ability to adapt.

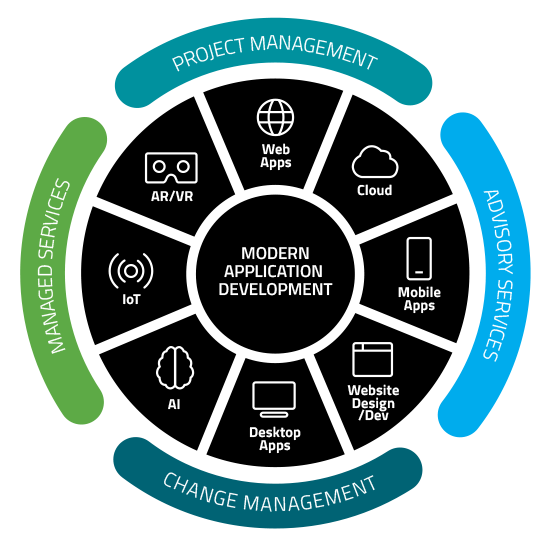

Delivering the Full Spectrum of Modern Applications

(with built-in security, cloud enablement, and project management)

End-to-End Enterprise-Level Cloud App Modernization

Your business has a lot of apps. Do you containerize them? Refactor them? Rewrite them? Rehost them? The answer could make all the difference to your bottom line. We will consult with you to determine what stage of the cloud journey you’re in and which of the following app modernization offerings will drive the most value for your organization:

Web Applications

We can deliver a performant, secure, and scalable web app that integrates with your existing environments for a hybrid – yet still modern – solution with an enjoyable user experience. If rehosting is needed, we can move your application from its current environment to newer infrastructure or to the cloud.

Technical capabilities include React, Angular, Blazor, Vue.js, and more.

Cross-Platform Mobile Applications

We can deliver everything from a marketing app to a complex, scalable, data-intensive business app. We handle the entire lifecycle of your mobile application, including design, usability testing, technical architecture and development, integration with back-end systems, deployment, and ongoing support. We can deliver solutions for iOS, Android, and Windows operating systems, and we can build your app in Azure to take advantage of features such as auto-scaling.

Technical capabilities include Xamarin, React Native, Flutter, and more.

API Development & Integration

Well-architected APIs are key to maximizing your tech investment. We’re experienced with integrating custom and off-the-shelf APIs to give our clients improved performance, reliable scalability, and one source of truth.

Artificial Intelligence and Machine Learning

Whether you are looking to digitize documents with OCR and search, create a bot for better user interaction, evaluate images with computer vision, or augment a system with machine learning, we provide the breadth and depth of AI / ML knowledge to help you succeed.

Technical capabilities include computer vision and speech, natural language processing, and more.

Our Agile Approach to Delivering Modern Applications

At Core, we create delivery teams to best serve our clients. When you entrust Core to lead a project, our agile approach includes budget, schedule, and scope discipline.

Azure Expertise

API Management

App Services

Service Bus

Logic Apps

Cosmos DB

Azure Functions

Azure Search

Azure Kubernetes

Data Factories

Azure SQL Database

Azure AD B2C

Azure Key Vault

Azure Technologies

Front End

Back End

Monitoring / Execution

Data / Storage

AI

Security

We are proficient in dozens of additional technologies

What Our Clients Say

Software Engineer Manager | Trek Bicycle